In facing the challenges posed by quantum cryptography, the importance of human-centred design and thoughtful product development cannot be overstated. Ultimately, the value of quantum-secure systems lies not in the complexity of their mathematics, but in the confidence they inspire in users.

Introduction

Quantum computing is advancing rapidly, transitioning from theoretical frameworks to an integral component of technology strategy. No longer confined to research laboratories, quantum computers are influencing global policy, enabling innovative startup ecosystems, and prompting critical discussions concerning digital trust. A future where quantum computers possess the power to compromise contemporary encryption algorithms is becoming increasingly plausible, making cybersecurity a focal point for emerging risks.

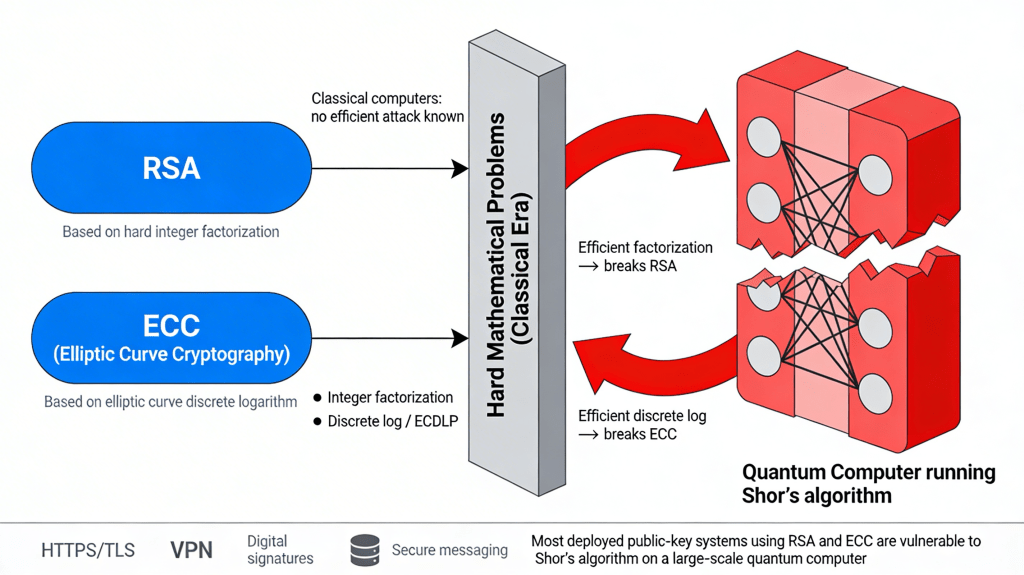

Traditional digital security has depended on computationally intensive mathematics; algorithms such as RSA and Elliptic Curve Cryptography (ECC) have protected emails, financial accounts, and government information by leveraging the limitations of classical computers in solving complex mathematical problems efficiently. The development of cryptographically relevant quantum computers (QRQC) will enable the decryption of these longstanding protective mechanisms.

The term “Y2Q,” meaning “years to quantum,” draws inspiration from the Y2K event. Unlike Y2K, which was characterized by a defined timeline and anticipated disruption, Y2Q’s arrival and impact remain uncertain. The event known as Q-day, sometimes referred to as the “Quantum Apocalypse,” will occur without warning. Consequently, data currently being collected and archived could be vulnerable to decryption when these advanced capabilities become available [1].

The Quantum Threat

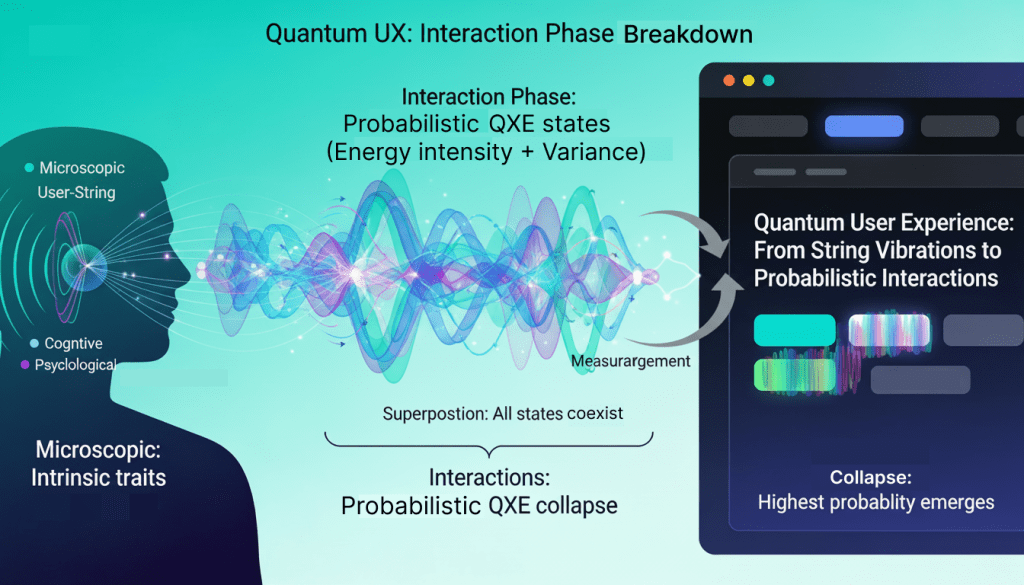

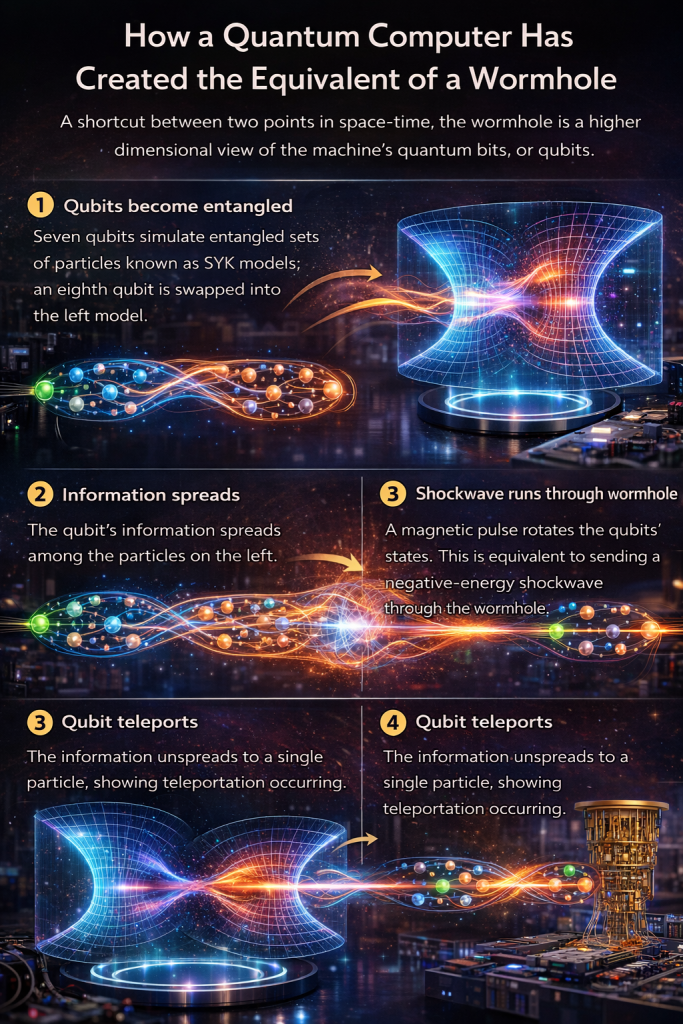

Quantum machines leverage principles such as superposition, entanglement, and interference to evaluate numerous computational pathways simultaneously. As a result, certain problems that are infeasible for classical computers may become solvable using quantum algorithms.

The mathematical foundations of RSA and ECC encryption are vulnerable to quantum computers, which can efficiently solve them through Shor’s algorithm unless reinforced by quantum cryptographic standards. Notably, Shor’s algorithm is capable of factoring large numbers exponentially faster than the best-known classical techniques, thereby threatening the security of most existing public-key cryptography once sufficiently advanced quantum hardware becomes available.

While government entities responsible for managing classified information have led initiatives in post-quantum standardization, sectors such as banking, financial services, healthcare, and intellectual property development are increasingly adopting post-quantum cryptography standards to safeguard their customers and proprietary assets.

A primary motivator for the adoption of quantum cryptography standards among both governmental and private organizations is the increasing risk of “harvest now, decrypt later” (HNDL) attacks. In these scenarios, adversaries are actively accumulating encrypted data with the intention of decrypting it in the future, once cryptographically relevant quantum computers (QRQC) become available. The potential consequences of such attacks are severe, as they threaten the confidentiality of critical financial and governmental information.

Given the substantial and accelerating investment in quantum computing, the timeline for the emergence of QRQC remains uncertain. Most organizations have yet to establish comprehensive transition strategies for migrating to post-quantum cryptography, making the duration and complexity of this shift unclear. This uncertainty incentivizes early developers of QRQC to maintain secrecy regarding their progress, given the far-reaching implications of such advancements [2].

Post-Quantum Cryptography: The Counter-Move

The U.S. National Institute of Standards and Technology (NIST) has played a pivotal role in strengthening the future of digital security by advancing the Post-Quantum Cryptography (PQC) project since its inception in 2016. This initiative was launched in response to the mounting threat posed by quantum computers to many current cryptographic techniques.

Over several years, NIST has coordinated global collaboration among cryptographers, researchers, and industry experts to rigorously analyze and benchmark candidate algorithms for their resilience against quantum attacks as well as their practicality for real-world deployment. In August 2024, NIST reached a significant milestone by releasing its principal PQC standards. These new standards define protocols for both key establishment (securely exchanging encryption keys) and digital signatures (ensuring authenticity and integrity), which are integral for secure communications and transactions in a quantum future.

Central to these standards are robust lattice-based schemes—specifically, key encapsulation and digital signature algorithms derived from the CRYSTALS‑Kyber and CRYSTALS‑Dilithium families. These schemes were selected for their strong security foundations and operational efficiency, having undergone extensive cryptanalysis and practical evaluation throughout the standardization process. In addition, NIST has approved a hash-based signature standard, which offers an alternative approach to digital signatures and demonstrates resilience to quantum attack vectors [3].

Following the publication of these landmark PQC standards, NIST has strongly recommended that organizations across all sectors proactively initiate the migration to quantum-resistant cryptography. This migration is not simply a technical update but a strategic imperative to safeguard sensitive data and critical infrastructure against future quantum-enabled threats. The process should begin with a comprehensive assessment of existing cybersecurity products, services, and communication protocols to identify where vulnerable, quantum-insecure algorithms—such as RSA or ECC—are currently deployed. Once identified, organizations must develop detailed transition plans to replace or upgrade these algorithms with NIST-approved quantum-safe alternatives.

In parallel, NIST continues its work by monitoring the performance and security of newly developed algorithms and by supporting research into additional candidates for future standardization. This ongoing evaluation helps ensure that emerging threats can be addressed promptly, and that the cryptographic landscape remains robust and adaptive as quantum computing technology advances. Ultimately, widespread adoption of PQC is essential for preserving the confidentiality, integrity, and authenticity of digital information in a post-quantum era.

PQC vs. Quantum-Native Security

It’s important to recognize the difference between post-quantum cryptography (PQC) and security methods that are inherently quantum. PQC relies on new classical algorithms designed to withstand quantum attacks, whereas quantum-native strategies leverage physical principles. A prime example is Quantum Key Distribution (QKD), which utilizes quantum physics for the secure exchange of symmetric encryption keys.

QKD operates by transmitting photons—tiny light particles—over optical fibers. In quantum mechanics, simply observing a quantum particle changes its state. When a digital bit’s value is encoded onto a single quantum particle, any attempt at eavesdropping becomes an observation, causing the system’s state to collapse. This leads to detectable errors in the bit sequence shared by the sender and receiver. If these errors are observed, the participants know that someone may have tried to access their key [4].

China has established a quantum communication network spanning thousands of kilometers and connecting cities like Beijing and Shanghai, providing QKD-based security to banks, grid operators, and government facilities. In Europe, all 27 EU member states are supporting EuroQCI, a continental initiative to build quantum-secure communications infrastructure. The United States, meanwhile, has prioritized PQC, expressing skepticism about QKD’s practicality for most applications and favouring robust PQC algorithms as the preferred approach [5].

Designing Trust in a Quantum Future

In facing the challenges posed by quantum cryptography, the importance of human-centred design and thoughtful product development cannot be overstated. Ultimately, the value of quantum-secure systems lies not in the complexity of their mathematics, but in the confidence they inspire in users. Trust is built through intuitive experiences—when people feel secure, they are more likely to embrace and rely on new technologies, regardless of their understanding of the underlying cryptographic principles.

As organizations transition to post-quantum cryptography, design must take a leading role in making these advanced protections seamless and reassuring. Thoughtful interface and product design will be essential, not only in ensuring that end users remain unaware of the underlying complexity, but also in clearly communicating the benefits and necessity of this evolution to all stakeholders.

By embedding trust and clarity into every touchpoint, design provides the roadmap for integrating quantum security into real-world applications, ensuring that the promise of quantum-safe technology is realized in ways that serve and protect everyone.