Design in the public sector has a unique power: one improvement can positively affect millions without requiring downloads, purchases, or even drawing attention to itself.

Introduction

Government digital services have a huge impact on our daily lives, often far greater than that of most private-sector products. Yet many of these digital experiences are frustrating. They are often difficult to use, with hard-to-find information, inaccessible forms, confusing processes, outdated designs, and systems built around internal needs rather than people’s real-world problems.

However, this can change. When governments apply human-centred design, the results can be significant. Accessible, user-friendly online government resources help strengthen relationships with citizens by providing better, more direct services that truly address public needs.

Design with Constraints, Not Against Them

Government projects must navigate an array of constraints, including legislation, privacy requirements, security protocols, and rigorous accessibility requirements such as WCAG 2.1 AA and the Accessibility for Ontarians with Disabilities Act (AODA). Private organizations serve specific user groups or customer bases. Government services, by contrast, must meet the needs of the entire population. The complexity of user requirements and the diversity of stakeholders can vary significantly depending on the nature of the service.

These limitations are frequently perceived as obstacles, but they function as essential guardrails. Integrating these restrictions into the design process, rather than resisting them, is what produces highly inclusive, stable, and usable public services.

Government transformation is often envisioned as dramatic, system-wide change, yet substantive progress typically comes from targeted efforts to reduce friction at crucial points in service delivery. For example, at Ontario’s Ministry of Transportation (MTO), reducing the completion time of a high-volume digital form by 40% led to immediate, measurable improvements for thousands of residents. Meaningful advances in public service delivery are achieved through incremental, focused enhancements.

Collaborate Directly With Those Most Affected

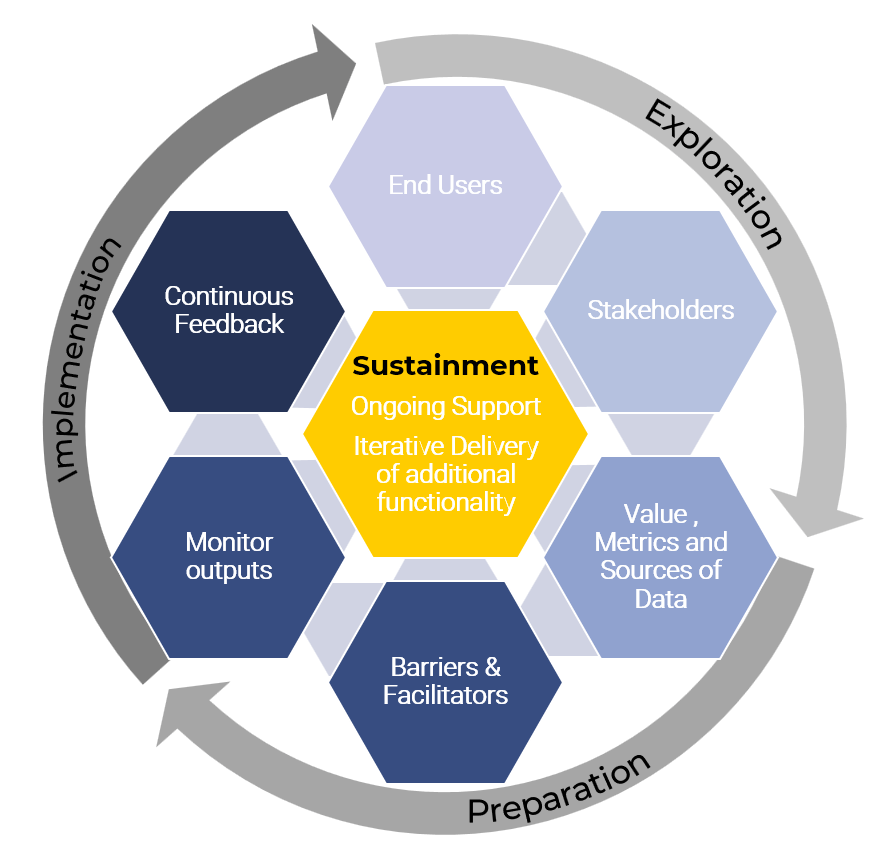

In successful projects, the insights that drive innovation seldom come from requirements documents alone. They emerge from engaging with the people who use these tools every day, as well as the staff who help citizens navigate them. Their firsthand experience is the most valuable source of user experience research.

For this reason, comprehensive user research should be conducted before any project begins, with all relevant stakeholders involved. This takes considerable effort and coordination. Privacy, regulatory, and other considerations must also be addressed before stakeholders are contacted. Even so, it remains a crucial step. Properly conducted user research ensures that digital solutions are designed and developed to fully meet the needs of all identified stakeholders.

Accessibility Comes First

Meeting accessibility standards is fundamental to effective public service design, not just a task to complete. Genuine accessibility involves planning from the outset for users with varied needs, abilities, technologies, and circumstances.

Accessibility goes beyond legal and regulatory compliance. It is an essential principle that governments must uphold to guarantee inclusion and equal access to digital services for everyone.

For example, accessible design may include using clear language, providing alternative text for images, supporting full keyboard navigation, making content work with screen readers, and ensuring sufficient colour contrast for users with visual impairments.

By integrating these considerations early in development, governments can better serve people with disabilities, older adults, and others who might face barriers in accessing online resources.

Clarity Drives Government Success

Government services don’t have to be showy; they should be:

- Predictable: citizens should know what to expect at every stage, such as consistent processing times for applications and renewals.

- Consistent: procedures and outcomes should remain the same regardless of region or department, so everyone receives equal treatment and support.

- Accessible: services should be usable by people with different abilities, languages, and levels of technology access. Examples include forms that work on mobile devices and support screen readers.

- Understandable: instructions must be clear and available in multiple languages to help users avoid confusion and reduce mistakes or delays.

- Resilient: systems should keep working during emergencies or periods of high demand, so people can still get help during natural disasters or network outages.

Whether people are renewing a licence, applying for benefits, or filing a report, clear and straightforward processes matter far more than “delightful interactions.” For example, most users value an easily navigable online portal and step-by-step checklists more than flashy graphics or animations.

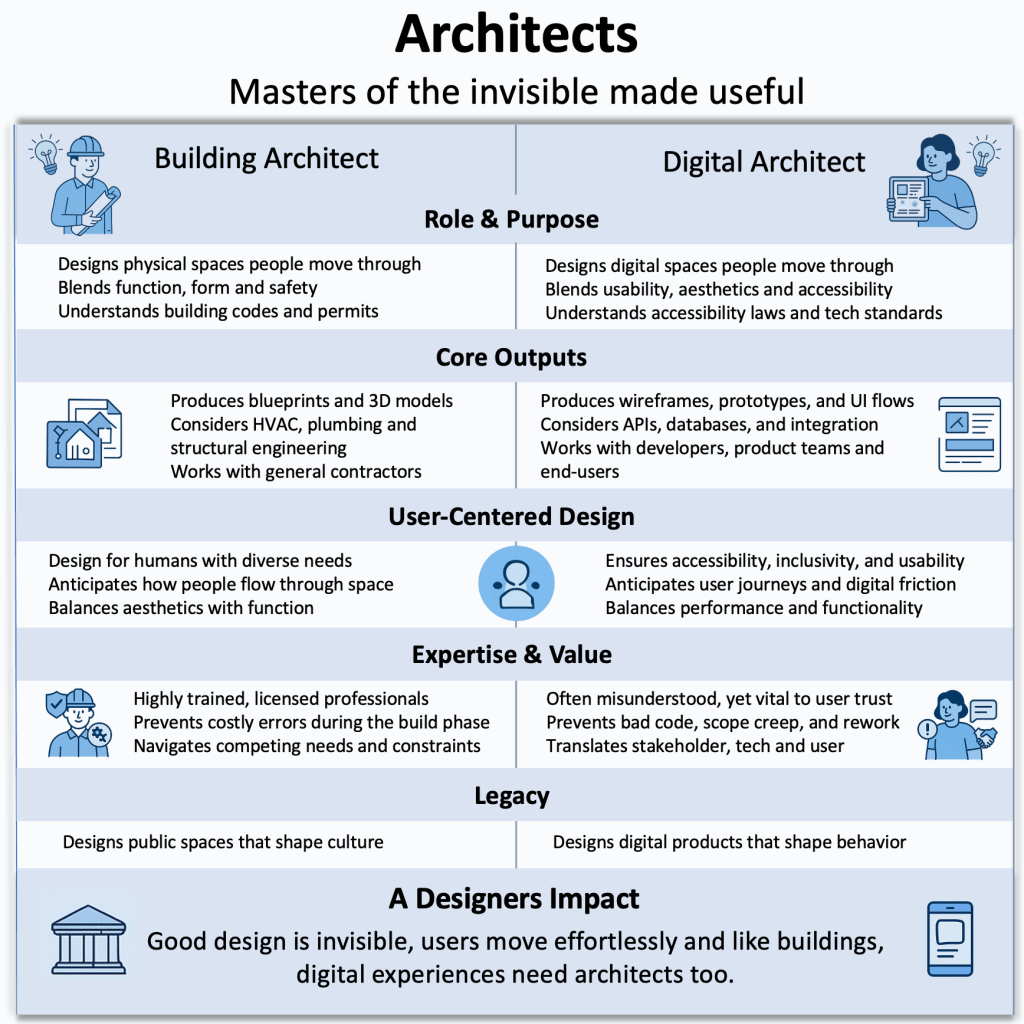

Effective Government Design Is Unseen

Effective design is often invisible, not because it lacks importance, but because it eliminates obstacles that once seemed unavoidable. For instance, automatic data validation can prevent common entry errors, and pre-populated fields can make long forms easier to complete, quietly streamlining tasks that would otherwise frustrate users.

Design in the public sector has a unique power: one improvement can positively affect millions without requiring downloads, purchases, or even drawing attention to itself. Simplifying a government website’s navigation or shortening its forms could collectively save citizens countless hours, with no need to advertise the change.